The ability to work effectively and effortlessly with a team of highly skilled colleagues is a decisive competitive advantage. This is true on a basketball court or soccer field, and it is equally true in a corporate environment, a movie special effects studio, or an architectural design firm. Since COVID-19, much of today’s workforce is no longer centralized in a single location. Your coworkers or business partners are very likely spread across the country, if not across the world, so the ability of stakeholders to collaborate effectively is now a must-have for any corporation.

NVIDIA Omniverse™ is an open platform built for virtual 3D design collaboration and real-time, physically accurate simulation. Omniverse consists of multiple GPU-accelerated applications and purpose-built connectors that allow it to work directly with third-party applications such as Unreal Engine from Epic Games and popular creative tools from Autodesk, Adobe, Blender, Reallusion, Trimble, and more.

In this post, we go over the system requirements and the process for installing Omniverse Open Beta on Windows 10.


Topics: PNY, Quadro, GPU, NVIDIA GPU, Pro Tip, Quadro RTX, Quadro RTX GPUs, News, NVIDIA Quadro Solutions, NVIDIA Omniverse, Omniverse, Ampere, NVIDIA RTX A6000, RTX A6000, NVIDIA RTX A5000, NVIDIA RTX A4000

Previously, we posted a three-part PNY Pro Tip series dedicated to setting up Ubuntu 18.04 LTS for CUDA programming and ultimately accessing the NVIDIA GPU Cloud (NGC) registry to run deep learning GPU benchmarks using TensorFlow. In this Pro Tip, we review the updated process for installing the NVIDIA GPU driver on the latest Ubuntu LTS Desktop release, 20.04 LTS, also known as Focal Fossa.


Topics: PNY, Quadro, GPU, NVLink, NVIDIA GPU, 4K Displays, Pro Tip, 8K, 8K HDR, Quadro RTX, Quadro RTX Workstations, Quadro RTX GPUs, News, NVIDIA Quadro Solutions, Ampere, NVIDIA RTX A6000, RTX A6000

NVLink was introduced with the Pascal generation of graphics processors in 2016. It was developed to address the bandwidth limitations imposed by the PCI Express data path as demand for high data throughput continued to accelerate. Since its inception, NVIDIA has improved the NVLink implementation and increased its bandwidth from a theoretical maximum of 80 GB/s of bidirectional data throughput to the 100 GB/s found on NVIDIA Quadro RTX 8000 and NVIDIA Quadro RTX 6000 graphics boards. With the latest Ampere architecture-based NVIDIA RTX A6000 professional graphics card, NVLink now provides up to 112 GB/s of bidirectional data transfer between two connected NVIDIA RTX A6000 graphics boards.
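To put those figures in perspective, the short Python sketch below computes the relative gain between the quoted bandwidths and an idealized transfer time. It assumes the link is symmetric, so each direction gets half of the bidirectional figure, and it uses a hypothetical 48 GB payload chosen only for illustration. The function names are ours, and real-world throughput will be lower than these theoretical peaks.

```python
# Bidirectional NVLink bandwidth figures quoted in the paragraph above, in GB/s.
NVLINK_BIDIRECTIONAL_GBPS = {
    "initial NVLink figure": 80,
    "Quadro RTX 8000 / RTX 6000": 100,
    "RTX A6000": 112,
}


def per_direction(bidirectional_gbps: float) -> float:
    """Assumption: the link is symmetric, so each direction gets half."""
    return bidirectional_gbps / 2


def percent_gain(old_gbps: float, new_gbps: float) -> float:
    """Relative bandwidth increase from old_gbps to new_gbps, in percent."""
    return (new_gbps - old_gbps) / old_gbps * 100


def ideal_transfer_seconds(size_gb: float, bidirectional_gbps: float) -> float:
    """Lower bound on a one-way copy time at the theoretical peak rate."""
    return size_gb / per_direction(bidirectional_gbps)


if __name__ == "__main__":
    payload_gb = 48  # hypothetical payload size, for illustration only
    for name, bandwidth in NVLINK_BIDIRECTIONAL_GBPS.items():
        seconds = ideal_transfer_seconds(payload_gb, bandwidth)
        print(f"{name}: {per_direction(bandwidth):.0f} GB/s per direction, "
              f"{payload_gb} GB one-way in at least {seconds:.2f} s")
    print(f"80 -> 112 GB/s gain: {percent_gain(80, 112):.0f}%")
```

These numbers are upper bounds only: protocol overhead, memory bandwidth, and application behavior all reduce the throughput seen in practice.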


Topics: PNY, Quadro, GPU, NVLink, NVIDIA GPU, 4K Displays, Pro Tip, 8K, 8K HDR, Quadro RTX, Quadro RTX Workstations, Quadro RTX GPUs, News, NVIDIA Quadro Solutions, Ampere, NVIDIA RTX A6000, RTX A6000

On December 15, 2020, the sophisticated new NVIDIA® RTX™ A6000 workstation graphics board became available to professionals who require the fastest GPU designed specifically to accelerate professional applications and workflows. Architects, artists, designers, engineers, and scientists can now purchase this graphics board for their workstations and enjoy up to twice the performance for AI training or graphics rendering compared to the still powerful previous-generation NVIDIA Quadro RTX 6000.


Topics: PNY, Quadro, GPU, NVIDIA GPU, 4K Displays, Pro Tip, 8K, 8K HDR, Quadro RTX, Quadro RTX Workstations, Quadro RTX GPUs, News, NVIDIA Quadro Solutions, Ampere, NVIDIA RTX A6000, RTX A6000

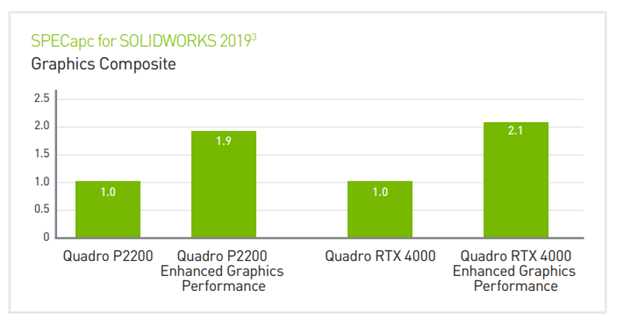

SOLIDWORKS is a computer-aided design (CAD) and computer-aided engineering (CAE) application from Dassault Systèmes. This highly versatile and capable software lets designers quickly sketch out ideas, experiment with different features, and produce models or detailed engineering drawings.

In SOLIDWORKS 2019, Dassault Systèmes introduced an experimental feature that offloads additional rendering operations from the CPU to powerful NVIDIA® GPUs. It is called “Enhanced graphics performance” and is found under the “Performance” sub-menu. By leveraging the OpenGL 4.5 hardware acceleration of NVIDIA Quadro GPUs, this setting significantly improves responsiveness during pan, zoom, and rotate operations in the part or assembly environment. Performance scales with higher-end graphics cards, so the feature is ideally suited to high-end and above NVIDIA Quadro® RTX™ and NVIDIA RTX professional graphics products.


Topics: Quadro, GPU, NVIDIA GPU, 4K Displays, Pro Tip, 8K, 8K HDR, Quadro RTX, Quadro RTX Workstations, Quadro RTX GPUs, News, NVIDIA Quadro Solutions, Quadro View, RTX Voice

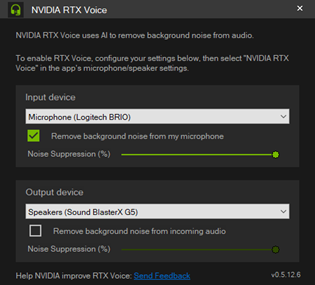

During this unprecedented period of COVID-19, many of us have found ourselves transitioning from familiar, bustling office environments to our not-so-quiet home settings. We now share our office spaces not with professional coworkers, but with family members and pets. Our homes are also not as well insulated from the outside as commercial office buildings. Street noise and friendly neighbors doing yard work are new challenges in our daily office lives.

These changes make it difficult to participate in online meetings without adding noise, forcing attendees to constantly switch between mute and unmute to keep unwanted sounds from leaking into the conversation.

NVIDIA RTX Voice is a stand-alone application, currently in beta, that leverages the Tensor Cores found in Turing RTX graphics cards and powerful neural network algorithms to remove background noise from your audio input. RTX Voice can also help remove background noise coming from other meeting participants, making the conversation much easier to understand.


Topics: Quadro, GPU, AI, NVIDIA GPU, Multi GPU, 4K Displays, Pro Tip, 8K, 8K HDR, Quadro RTX, Quadro RTX Workstations, Quadro RTX GPUs, News, NVIDIA Quadro Solutions, Quadro View, RTX Voice

Your system’s NVIDIA GPU driver is a key component for performance and system stability. NVIDIA drivers have long been recognized as the gold standard, bringing up-to-date features and new capabilities to NVIDIA Quadro and GeForce graphics boards. NVIDIA recently released enhanced drivers for NVIDIA Quadro and GeForce GPUs that implement exciting new features for professionals and enthusiast gamers.


Topics: PNY, NVIDIA, GeForce, NVIDIA Quadro, 3ds Max, Autodesk, NVIDIA Quadro GPUs, Pro Tip, Quadro RTX, Driver, Chaos V-Ray, Blender, Quadro RTX GPUs, NVIDIA Quadro Solutions, 3D Rendering, Game Ready, Studio

Working alongside your peers in an office environment promotes collaboration and catalyzes amazing results, particularly when you’ve focused so deeply on a particular issue or project that you miss other issues or potentially brilliant ideas hidden within your labors. This is when multiple sets of eyes (and brains) are truly beneficial to your overall success.

Currently, many of us are working from home, and sharing ideas becomes a choke point unless you can easily share your screen to promote collaboration, gather input, and realize new ideas. If you own an NVIDIA Quadro professional graphics card, an exciting new tool, NVIDIA Quadro Experience, offers a way to record the best ideas originating on your desktop and share them with others under Microsoft Windows 10.

You can learn more about the NVIDIA Quadro Experience program and download your own copy by visiting the following link:

https://www.nvidia.com/en-us/design-visualization/software/quadro-experience/


Topics: PNY, NVIDIA, NVIDIA Quadro, NVIDIA Quadro GPUs, Pro Tip, Quadro RTX, NVIDIA Turing Architecture, Quadro RTX GPUs, NVIDIA Quadro Solutions, Quadro Experience

If you are one of the millions of creative and technical professionals who rely on NVIDIA® Quadro® graphics cards in your office workstation, but you now find yourself working from home during the COVID-19 pandemic on a personal workstation or professional PC, here are three reasons to use a system with an NVIDIA Quadro graphics card while working from home.


Topics: PNY, NVIDIA, NVIDIA Quadro, Pro Tip, Quadro RTX, NVIDIA Turing Architecture, Quadro RTX Workstations, Quadro RTX GPUs, NVIDIA Quadro Solutions, Quadro Experience, Work From Home

Modern GPUs can drive multiple displays from a single graphics card. Multi-screen setups increase the impact of visual imaging in commercial environments and enhance enthusiast home gaming.

Technologies such as NVIDIA Surround and AMD Eyefinity can bind multiple displays together in software to create one massive virtual display. However, Surround and Eyefinity are both consumer-level technologies that come with a multitude of limitations and compromises, such as support for only 3 to 6 monitors. These limitations make them unsuitable for creative professional, interactive digital signage, live event, security, or industrial applications.


Topics: NVIDIA, NVIDIA Quadro, Immersive Displays, Pro Tip, Quadro RTX, NVIDIA Turing Architecture, Artificial Intelligence, Multi-display, NVIDIA Mosaic, GPU acceleration, Quadro RTX GPUs, Education, NVIDIA Quadro Solutions